The $200K Demo That Never Shipped

A financial services firm hired a consulting company to build an AI-powered document classifier. The POC took 8 weeks and cost $120,000. In the demo, it classified loan applications with 94% accuracy. The CFO approved the production rollout. Six months and another $180,000 later, the project was shelved. The classifier worked on the 500 sample documents they tested with. It failed on real documents that had handwritten notes, were scanned at odd angles, or used forms from before 2019.

McKinsey's 2025 data shows that two-thirds of organizations with AI pilots haven't moved them to production. Only 1 in 5 AI investments delivers measurable ROI. The demo works. The production system doesn't. And nobody planned for the gap between them.

Seven Reasons Your Pilot Died

We've watched pilots fail at companies of every size. The reasons cluster into the same seven patterns.

1. No Success Metric Defined Before You Started

The pilot was approved because "AI is important." Nobody wrote down what success looks like. After 8 weeks, the team demos the model. Stakeholders nod. Then someone asks: "So, is this working?" Nobody knows. There's no baseline to compare against. There's no target accuracy, speed improvement, or cost reduction that was agreed on before the build started.

Fix: Before writing a line of code, agree on one metric. "Process 80% of invoices without human intervention" or "Reduce quote turnaround from 3 days to 4 hours." Write it down. Put a number on it.
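Writing the metric down can be as literal as putting it in code. Here's a minimal sketch, assuming a hypothetical `SuccessMetric` class of our own invention. The baseline for the invoice example is made up for illustration; the targets come from the two examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    """One agreed-upon pilot metric, written down before the build starts."""

    name: str
    baseline: float  # value measured before the pilot
    target: float  # value agreed on with stakeholders
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        """Return True if the observed value reaches the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# "Process 80% of invoices without human intervention"
straight_through = SuccessMetric(
    name="invoices processed without human intervention (%)",
    baseline=0.0,  # assumed baseline, for illustration only
    target=80.0,
)

# "Reduce quote turnaround from 3 days to 4 hours"
quote_turnaround = SuccessMetric(
    name="quote turnaround (hours)",
    baseline=72.0,  # 3 days
    target=4.0,
    higher_is_better=False,
)
```

When someone asks "So, is this working?", the answer becomes a call to `is_met` with the measured value instead of a debate.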

2. Built on Sample Data, Breaks on Real Data

This is the most common cause of pilot failure. Your sample dataset is clean, labeled, and representative of the easy cases. Real data has misspellings, missing fields, duplicate entries, edge cases nobody anticipated, and formats that changed three times in the last five years. A model that scores 95% on sample data might score 62% on production data.

A healthcare company we worked with built a claims routing model on 10,000 historical claims. Accuracy in testing: 91%. Accuracy on the first month of live claims: 67%. The difference? Their test data came from one office. Live data came from 14 offices, each with different coding practices and form versions.

Fix: Test on the ugliest data you have. Pull it from production systems, not curated datasets. If your model can't handle messy data, you'll find out now instead of after deployment.
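One way to catch the one-office problem early is to break accuracy down by where the data came from instead of reporting a single number. This is a rough sketch, not a full evaluation harness. The function names and the `(segment, predicted, actual)` record shape are assumptions for illustration.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Return {segment: accuracy} for (segment, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, predicted, actual in records:
        total[segment] += 1
        if predicted == actual:
            correct[segment] += 1
    return {segment: correct[segment] / total[segment] for segment in total}


def weak_segments(records, threshold):
    """List segments whose accuracy is below the threshold, sorted by name."""
    scores = accuracy_by_segment(records)
    return sorted(seg for seg, acc in scores.items() if acc < threshold)


# Hypothetical labeled predictions from two offices.
sample_records = [
    ("office_a", "approve", "approve"),
    ("office_a", "deny", "deny"),
    ("office_b", "approve", "deny"),
    ("office_b", "deny", "deny"),
]
```

A segment can be an office, a form version, or a scan source. A model that looks fine on average can still be failing badly on one of them.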

3. Nobody Owns the Production System

The data science team builds the pilot. They're excited, they demo it, they move on to the next project. Now who monitors the model in production? Who retrains it when accuracy drifts? Who handles the 3 AM alert when it starts returning errors? Nobody. The model degrades over weeks. Nobody notices until a customer complains.

Fix: Assign a production owner before the pilot starts. Not the data science team. A product owner or engineering lead who will maintain it after the builders leave. If nobody will own it, don't build it.
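Whoever owns the system needs something that notices drift before a customer does. Below is a minimal sketch of a rolling accuracy check, assuming you can collect the true label for at least a sample of predictions. The class name, window size, and threshold are all hypothetical.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent labeled predictions."""

    def __init__(self, window_size, alert_below):
        self.outcomes = deque(maxlen=window_size)  # True = correct prediction
        self.alert_below = alert_below

    def record(self, predicted, actual):
        """Store whether one prediction matched the true label."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        """Alert only once the window is full, to avoid noisy early alarms."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.alert_below
```

In a real deployment the alert would page the production owner or post to a channel. The point is that someone specific receives it.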

4. Security and Compliance Got Skipped During the POC

POCs move fast. Nobody stops to ask: Does this model process PII? Where does the data go? Are we sending customer information to a third-party API? Does this meet our compliance requirements? These questions get deferred to "the production phase." Then the production phase arrives, the security team reviews the architecture, and they find that the POC sends unencrypted customer data to an external model endpoint. The rewrite takes longer than the original build.

Fix: Run a 2-hour security review in week 1 of the pilot. Not a full audit. Just answer: What data flows where? What's sensitive? What regulations apply? Surface the blockers early so they don't kill you at the end.
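The output of that review can be a short inventory of data flows. Here's one possible shape for it, sketched under our own assumptions: the `DataFlow` fields and the two rules in `find_blockers` are illustrative, not a compliance standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    """One hop that data takes during the pilot."""

    source: str
    destination: str
    contains_pii: bool
    leaves_network: bool
    encrypted_in_transit: bool


def find_blockers(flows):
    """Return human-readable findings for flows that need attention."""
    findings = []
    for flow in flows:
        if not (flow.contains_pii and flow.leaves_network):
            continue
        route = f"{flow.source} -> {flow.destination}"
        if not flow.encrypted_in_transit:
            findings.append(f"{route}: unencrypted PII sent externally")
        else:
            findings.append(f"{route}: PII sent externally, confirm it is permitted")
    return findings


# Hypothetical flows for a document classifier pilot.
pilot_flows = [
    DataFlow("loan_inbox", "internal_ocr", True, False, True),
    DataFlow("internal_ocr", "external_model_api", True, True, False),
]
```

Two hours is enough to fill in a list like this and find out whether the architecture has a problem the security team will reject later.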

5. The AI Team Leaves and Nobody Can Maintain It

Consultants build the POC. The contract ends. Your internal team looks at the codebase: Jupyter notebooks, undocumented Python scripts, hardcoded credentials, and a model file that nobody knows how to retrain. The system works today. In 6 months, when the data distribution shifts and accuracy drops to 70%, nobody knows how to fix it.

Fix: Require documentation and knowledge transfer as part of the SOW. If the consulting team can't hand off a system your team can maintain, the pilot isn't done. We build every system with handoff in mind: documented code, runbooks, and a training session for the team that inherits it.
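Hardcoded credentials are one of the easiest handoff problems to fix. A minimal sketch, assuming settings are supplied through environment variables. The variable names `MODEL_API_KEY` and `MODEL_ENDPOINT_URL` are hypothetical placeholders.

```python
import os


class MissingConfigError(RuntimeError):
    """Raised when a required setting is not provided."""


# Hypothetical setting names, for illustration only.
REQUIRED_SETTINGS = ("MODEL_API_KEY", "MODEL_ENDPOINT_URL")


def load_settings(env=None):
    """Read required settings from the environment and fail loudly if absent."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_SETTINGS if not env.get(name)]
    if missing:
        raise MissingConfigError("Missing required settings: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_SETTINGS}
```

Failing at startup with a clear message tells the inheriting team exactly what the system needs, which a credential buried in a notebook never does.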

6. You Tried to Solve Too Big a Problem

The CEO read an article about AI and wants to "automate the entire customer journey." The AI team tries to build a system that handles lead scoring, personalized outreach, proposal generation, and follow-up scheduling in one pilot. Each of those is a 3-month project on its own. The combined pilot collapses under scope creep.

Fix: One process. One metric. One pilot. If it works, expand. The company that automates invoice processing first, proves ROI, then moves to purchase order matching will ship faster than the company that tries to automate the entire AP department at once.

7. The Process Wasn't Redesigned Around AI

You automate a step in the middle of a manual process. Now the AI classifies the document, but a human still has to download it from email, rename the file, upload it to a folder, trigger the classifier, then manually enter the result into the ERP. You replaced one manual step with an AI step surrounded by five manual steps. The total time savings: negligible.

Fix: Map the entire workflow before automating any part of it. Figure out where the human steps are, which ones the AI replaces, and which ones need to be automated with regular code (file watchers, API integrations, queue systems). The AI model is the engine. You need to build the car around it.
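Most of "the car" is plain code. As a rough sketch, here is what replacing the download, rename, upload, and trigger steps might look like with a simple inbox folder. `classify_document` is a stand-in for the real model call, and `record_result` stands in for whatever writes to your ERP. Both are assumptions for illustration.

```python
import shutil
from pathlib import Path


def classify_document(path: Path) -> str:
    """Placeholder for the AI step; a real system would call the model here."""
    return "invoice" if "invoice" in path.name.lower() else "other"


def process_inbox(inbox: Path, processed: Path, record_result) -> int:
    """Classify every file in `inbox`, record the result, then move the file.

    `record_result(filename, label)` is supplied by the caller, for example
    a function that posts the label to the ERP.
    """
    processed.mkdir(parents=True, exist_ok=True)
    handled = 0
    # sorted() snapshots the listing so moving files does not disturb iteration.
    for path in sorted(inbox.iterdir()):
        if not path.is_file():
            continue
        label = classify_document(path)
        record_result(path.name, label)
        shutil.move(str(path), str(processed / path.name))
        handled += 1
    return handled
```

Run on a schedule or from a file watcher, a loop like this removes the manual steps around the model, which is where the time savings come from.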

We specialize in taking AI pilots to production. If you've got a POC that works in the demo but not in production, we can usually diagnose the problem in a single call. Tell us what you've built so far.

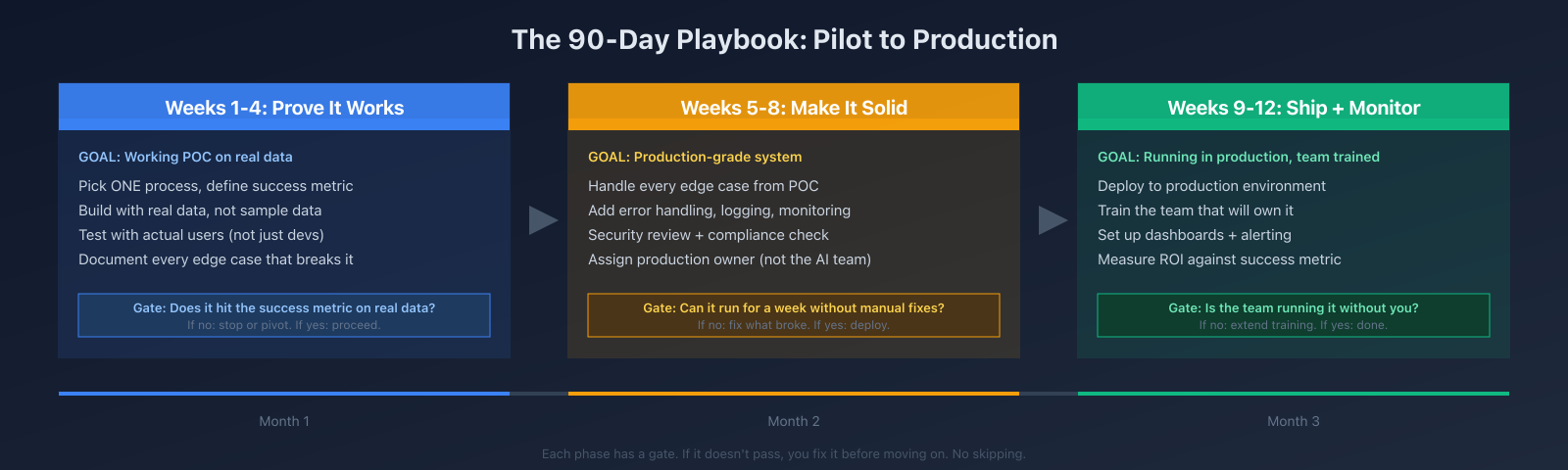

The 90-Day Playbook

Here's the approach we use with clients. 90 days from kickoff to production. Three phases, each with a gate that has to pass before you move on.

Weeks 1-4: Prove it works. Pick one process. Define the success metric. Build a working proof of concept on real production data, not sample data. Test it with the people who will actually use it. Document every edge case that breaks it. At the end of week 4, you have a clear answer: does this work on real data with real users? If not, stop or pivot. If yes, move to hardening.

Weeks 5-8: Make it solid. Take every edge case from the POC and handle it. Add error handling, logging, and monitoring. Run the security and compliance review. Assign the production owner. Build the integration layer that connects the AI to your existing systems. At the end of week 8: can the system run for a full week without anyone manually fixing it? If not, keep hardening. If yes, deploy.

Weeks 9-12: Ship and monitor. Deploy to production. Train the team that will own it. Set up dashboards that track the success metric and alert on anomalies. Measure actual ROI against the metric you defined in week 1. At the end of week 12, the team should be running the system without the builders. If they can't, extend training. If they can, the project is done.

The gates matter. Most failed pilots skip from a working demo straight to "production" without the hardening phase. That's where the 6-month delay comes from. You spend 4 weeks building, then 5 months firefighting issues that would have taken 4 weeks to fix in a structured hardening phase.
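Much of the hardening phase comes down to deciding what happens when the model is wrong or unavailable. Here's a minimal sketch of error handling and logging around a classifier, assuming the model returns a `(label, confidence)` pair. The function name, the 0.8 threshold, and the manual review queue are hypothetical.

```python
import logging

logger = logging.getLogger("pilot.hardening")


def classify_with_fallback(document, classify, manual_queue, min_confidence=0.8):
    """Run the model; route failures and low-confidence results to humans.

    Returns the label when the model is confident, otherwise None after
    appending the document to `manual_queue`.
    """
    try:
        label, confidence = classify(document)
    except Exception:
        # Log the full traceback so the production owner can diagnose it later.
        logger.exception("Classifier failed; routing document to manual review")
        manual_queue.append(document)
        return None
    if confidence < min_confidence:
        logger.warning("Low confidence %.2f; routing to manual review", confidence)
        manual_queue.append(document)
        return None
    return label
```

With a fallback like this, a bad input costs one manual review instead of a system that needs someone to fix it by hand, which is what the week 8 gate is testing for.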

Why the $200K Strategy Deck Doesn't Help

We wrote about this in our previous post on AI adoption. Consulting firms sell AI strategy engagements that produce a 60-page PowerPoint deck identifying "opportunity areas" and a "transformation roadmap." The deck costs $150K-$300K. It takes 3-6 months. At the end, you have recommendations but no working software.

The problem with strategy-first: by the time the deck is done, the technology has changed, your business priorities have shifted, and the team that was excited about AI has moved on to other projects. The deck sits in a SharePoint folder. Nobody references it.

The alternative: pick one problem, build a POC in 4 weeks, and prove whether AI solves it. If it works, you have a production system in 90 days. If it doesn't, you spent a fraction of the strategy deck budget and learned something real. Either way, you're further ahead than you'd be with a PowerPoint.

What Good Looks Like

A distribution company came to us after a failed pilot. They'd spent $90K with a different vendor on an AI-powered demand forecasting tool. The model worked on historical data. It failed on real-time orders because their ERP exposed order data in a batch file updated once daily, not in real time. The model was making predictions on yesterday's data.

We rebuilt the data pipeline first: a live API connection to the ERP, with clean data flowing to the model every 15 minutes. Then we retrained the model on 18 months of actual order data (not the curated sample the previous vendor used). The model hit 82% accuracy in week 3. After hardening, it held 79% accuracy on live data for a full month. The team took ownership in week 10. Total time: 11 weeks. Total cost: about 40% of what the previous vendor charged for the failed attempt.
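A stale feed like the daily batch file is cheap to detect if you check for it. This is an illustrative sketch, not the pipeline we built: `is_fresh` is a hypothetical helper, and the 15-minute default mirrors the refresh interval mentioned above.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_updated, now, max_age=timedelta(minutes=15)):
    """Return True if the data was updated within `max_age` of `now`."""
    return now - last_updated <= max_age


# Hypothetical timestamps: a 10-minute-old feed versus a day-old batch file.
check_time = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
recent_feed = check_time - timedelta(minutes=10)
daily_batch = check_time - timedelta(hours=24)
```

Refusing to predict, or at least flagging the prediction, when `is_fresh` returns False keeps the model from quietly forecasting on yesterday's orders.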

Same kind of model. The engineering around it made it work.

What to Do If You're Stuck

If you've got a pilot that demoed well but never shipped, the problem is almost always in one of the seven areas above. Start there. Figure out which one (or which combination) killed your project. Most of the time, it's fixable. The AI work isn't wasted. The engineering work around it needs to be done properly.

If you're about to start a pilot, use the 90-day playbook. Define your metric before you write any code. Test on real data from day one. Assign a production owner in the first week. Do the security review early. And keep the scope to one process.